Meta fails to detect AI-created fake ads inciting religious violence

Experiment shows Meta approves fake ads based on real hate speech during ongoing election campaign, despite pledge to the contrary

Meta — the parent company of Facebook and Instagram — approved an experimental series of AI-manipulated political advertisements during India’s ongoing Lok Sabha election that spread disinformation and incited religious violence, says a report shared exclusively with the Guardian UK.

According to the report, all the adverts were created as part of an experiment “based upon real hate speech and disinformation prevalent in India, underscoring the capacity of social media platforms to amplify existing harmful narratives”.

Facebook approved adverts containing known slurs toward Muslims in India, such as “let’s burn this vermin” and “Hindu blood is spilling, these invaders must be burned”, as well as Hindu supremacist language and disinformation about political leaders.

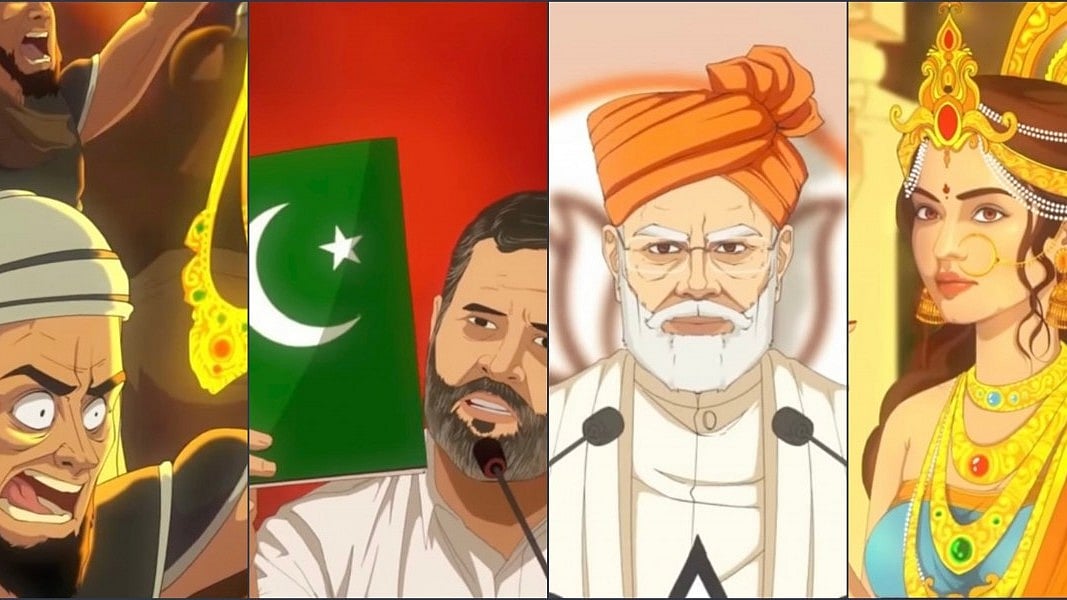

Another approved advert called for the execution of an opposition leader who Hindu supremacists falsely claimed wanted to “erase Hindus from India”, next to a picture of a Pakistan flag.

The adverts were created as a test case and submitted to Meta’s ad library — the database of all ads on Facebook and Instagram — by India Civil Watch International (ICWI) and Ekō, a corporate accountability organisation, to test Meta’s mechanisms for detecting and blocking political content that could prove inflammatory or harmful during India’s six-week election.

The adverts were submitted midway through voting, which began in April and will continue in phases until 1 June. The election will decide if prime minister Narendra Modi and his Hindu nationalist BJP government will return to power for a third term.

During his decade in power, Modi’s government has pushed a Hindu-first agenda which human rights groups, activists and opponents say has led to the increased persecution and oppression of India’s Muslims, the country's largest minority religious group.

As part of its election campaign, the BJP has been accused of using anti-Muslim rhetoric and stoking fears of attacks on Hindus, who make up 80 per cent of the population, to garner votes. During a rally in Rajasthan, Modi referred to Muslims as “infiltrators” who “have more children”, though he later denied this was directed at Muslims and said he had “many Muslim friends”.

He also stated that “the day I start talking about Hindu-Muslim (in politics) will be the day I lose my ability to lead a public life.” However, an animated video shared by the BJP’s official social media handles on 30 April which directly named and targeted Muslim Indians was removed on 1 May.

Meta’s systems failed to detect that all the approved adverts featured AI-manipulated images, despite a public pledge by the company that it was “dedicated” to preventing AI-generated or manipulated content being spread on its platforms during the Indian election.

Meta's transparency centre states: "Any advertiser running ads about social issues, elections or politics who is located in or targeting people in designated countries must complete the authorisation process required by Meta, except for news publishers identified by Meta. This applies to any ad that:

- Is made by, on behalf of or about a candidate for public office, a political figure, a political party, a political action committee or advocates for the outcome of an election to public office
- Is about any election, referendum or ballot initiative, including 'get out the vote' or election information campaigns
- Is about any social issue in any place where the ad is being run
- Is regulated as political advertising"

Five of the adverts were rejected for violating Meta’s community standards policy on hate speech and violence, including one that featured misinformation about Modi.

But the 14 that were approved, which largely targeted Muslims, also “broke Meta’s own policies on hate speech, bullying and harassment, misinformation, and violence and incitement”, according to the report.

Maen Hammad, a campaigner at Ekō, accused Meta of profiting from the proliferation of hate speech. “Supremacists, racists and autocrats know they can use hyper-targeted ads to spread vile hate speech, share images of mosques burning, and push violent conspiracy theories — and Meta will gladly take their money, no questions asked,” he said.

Meta also failed to recognise that the 14 approved adverts were political or election-related, though many took aim at political parties and candidates opposing the BJP. Under Meta’s policies, political adverts have to go through a specific authorisation process before approval, but only three of the submissions were rejected on this basis.

This meant these adverts could freely violate India’s election rules, which stipulate that all political advertising and promotion is banned in the 48 hours before polling begins and during voting. These adverts were all uploaded to coincide with two phases of election voting.

The report states: "During the election 'silence period,' which lasts 48 hours before polling begins and extends until voting concludes in each of India’s seven phases, India's Election Commission prohibits any individual or group posting advertisements or disseminating election materials. Despite the regulations outlined in India's Model Code of Conduct (MCC), reports have shown that political parties, and especially the ruling Bharatiya Janata Party (BJP) and far-right networks aligned with the party, have failed to comply."

Meta has a questionable history when it comes to right-wing political propaganda, as seen in the scandal involving Cambridge Analytica, the data firm principally owned by right-wing donor Robert Mercer. The scandal and subsequent investigation proved that the firm, where former Donald Trump aide Stephen K. Bannon was a board member, used data improperly obtained from Facebook to build voter profiles. The news put Cambridge Analytica under investigation and thrust Facebook into its biggest crisis ever.

Nick Clegg, Meta’s president of global affairs, recently described India’s election as “a huge, huge test for us” and said the company had done “months and months and months of preparation in India”. Meta said it had expanded its network of local and third-party factcheckers across all platforms, and was working across 20 Indian languages.

Hammad said the report’s findings had exposed the inadequacies of these mechanisms. “This election has shown once more that Meta doesn’t have a plan to address the landslide of hate speech and disinformation on its platform during these critical elections,” he said.

“It can’t even detect a handful of violent AI-generated images. How can we trust them with dozens of other elections worldwide?”
